in search of database unicorns

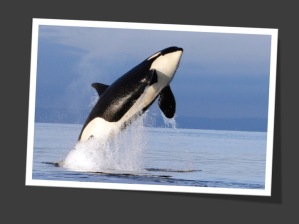

orca now open source

One of the most amazing pieces of database technology was just released into the wild—yes, I’m talking about Orca. And yes, I might be a bit biased having been involved in the project in the past.

Orca is the summary of query optimization research from the past three decades, implemented in a way that doesn’t cut many corners. There is still plenty of headroom to add features and functionality, but as you’ll realize very quickly when surveying the code, the architecture lets you slot in new development work rapidly and without affecting the existing functionality. That is really the strength of this framework.

For a detailed discussion of the framework, check out the long string of publications ranging from SIGMOD 2009 through VLDB 2015, from the original idea of parallel optimization all the way to rather elegant implementation details around CTEs and partitioning.

I want to take a moment and express my gratitude and recognize the people behind the project: Joe Hellerstein, Tim Kordas, Kurt Harriman, Brian Hagenbuch, John Eshleman, and Chuck McDewitt led some of the early rounds of discussions around a new optimizer in Greenplum Database. Siva Narayanan, Subi Arumugam, Chad Whipkey, Kostas Krikellas, Lyublena Antova, Mohamed Soliman, Rhonda Baldwin, Venky Raghavan, Amr El-Helw, Zhongxian Gu, Entong Shen, Joy Kent, George Caragia, Foyzur Rahman, Carlos Garcia and Michalis Petropoulos did the heavy lifting of creating a code base from scratch and building the best query optimizer out there—in the process they valiantly put up with the static code analysis, code coverage, and unit test madness their manager imposed on them. Ravi Shankar, Suchitra Ramani, Deepa Prabhu, and Leo Tung devised and implemented sophisticated test frameworks. Ronaldo Ama provided air cover and never lost faith in the project. Don Haderle served as an external advisor. Scott Yara and Bill Cook supported the enterprise all the way from the early days on—I shall never forget when Bill asked me about the latency of the memory allocator; you wish your CEO was that detail-oriented. Many a team member in Greenplum, EMC, and Pivotal contributed to discussions that made this project a success; my apologies to anybody I may have missed. A heartfelt “Thank You” to all of you.

Lastly, a nod to the skeptics who over all these years constantly questioned the feasibility of the endeavor and declared us crazy just for trying: you challenged us to try harder, think bigger, be more thorough, and, in the end, build a better product!

And now on to the code…

call me mike

Meeting new customers, prospects, and investors over the phone is an integral part of being a founder. Unfortunately, with an uncommon name like mine, almost every introduction has this awkward moment of “Excuse me, what did you say your name was?”

Reconstructing my name from the various spellings I’ve seen, “Florian Waas” is frequently understood as something like “Fvluohhreeuahnvwwaaohhsz”. This is mostly due to my German accent and–in an attempt to help the conversation along–I’d quickly spell it out. Yet, besides being a cumbersome exercise, it still leaves the other side wondering how to pronounce it.

So, I decided to drop my first name and just go with my middle name, Michael, instead. Now introductions are simple: everybody gets “Mike” right away.

So, just call me Mike!

database guy wins Turing Award

Mike Stonebraker is this year’s Turing Award winner!

ACM has chosen a recipient who, like few others, has shaped the software industry with both his academic contributions and his entrepreneurial ambition. Besides honoring an outstanding scholar, the award also underlines the relevance of database technology and the renewed interest in what is easily one of the most challenging disciplines in software engineering.

Congrats, Mike Stonebraker!

how Hadoop can disrupt the database industry

Hardly any book has attracted more attention among software companies than Clayton Christensen’s “The Innovator’s Dilemma”. Some companies even welcome it as a sign of “innovative spirit” when engineers slap a product manager over the head with it (figuratively, that is) whenever he or she presents an idea to increase business value along the lines of the existing corporate strategy.

In a nutshell, The Dilemma is the observation that established industries are more likely to invest in existing, proven but aging technologies rather than look for new, well, disruptive but initially risky or economically even outright unattractive innovations. The incumbents will miss the boat and their business model gets disrupted by a competitor who took the plunge. Eventually, the disrupter puts the incumbents out of business.

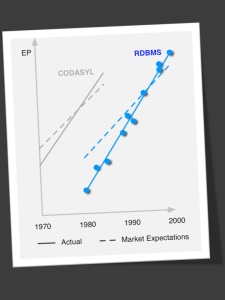

For a technology to disrupt an existing industry a few things must happen:

- The existing products have outgrown the actual market expectations, e.g., deliver more features than appear useful.

- The new technology changes one fundamental parameter in the equation, often at the cost of some other fundamental property like performance, …

smdb’13 — the votes are in

The reviewing period for this year’s IEEE Workshop on Self-Managing Databases (SMDB) is over and the top papers have been determined! I’m very excited we were able to compile a strong program. As the following Wordle constructed from the abstracts tells you… well, actually, I’ll let you come up with your own interpretation:

Big shout out to the members of the PC who’ve done an outstanding job reviewing a pile of papers in record time! Thank you!

And without further ado, here’s the list:

testing the accuracy of query optimizers

A while back I had a very interesting conversation with Jack, an application developer for a larger software company in the area; a person on the other side of the database, so to speak.

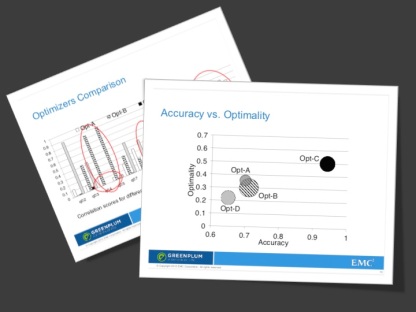

He maintains that database application programmers who have to support a number of database systems have long developed a kind of taxonomy of query optimizers: they know which systems have ‘good’ and which have ‘bad’ optimizers. They know on which systems queries need “tuning” and even to what extent. Apparently, there is at least one system that ‘gets it almost always right’ as Jack would put it, and lots that are way off by default. When asked, however, how he’d quantify these differences Jack simply shrugged: ‘It’s something you develop over time; can’t give you a number.’

We talked about measuring the quality of an optimizer in this post earlier on, and I put forward a number of desiderata for an optimizer benchmark then.

big data vs. pundits – 1 : 0

So, after all, it wasn’t such a ‘razor tight’ presidential race last night. Not that the Big Data camp expected one in the first place. Nate Silver, the poster child of predictive analytics in the political arena, made a pretty convincing case for why Obama was very likely to win; he actually quantified it at around 90% probability. Just for kicks, compare this to Kimberley Strassel at the WSJ, who in all earnestness made the case for Romney just one day before the election.

In short, independent of the political outcome of this election, it’s yet another great showcase where Big Data wins hands down over punditry!

P.S. if you don’t have a WSJ.com subscription you can also get a copy of Strassel’s—well, what am I going to call it? Article?

socc’12

As expected, being a stand-alone conference made it difficult to attract what I call “casual attendees”: folks who would be happy to spend a day or two after a big conference but won’t take the time out of their busy schedules for intercontinental travel just to attend this symposium. Consequently, most attendees were either authors themselves or locals happy to spend an extra hour in the morning rush hour to get to San Jose.

Anyways, the conference was well worth the time, even though I’d have liked to see more database/data management related talks.

One of the highlights was definitely Scott Shenker’s keynote on Software-defined Networks (SDN). Making networking sound interesting and appealing to a larger crowd is a feat. Luckily, Shenker is immensely talented and able to break down a complex technical subject for a fairly diverse crowd that, let’s face it, is just not that much into networking in the first place.

smdb’13 — spread the word

ICDE’s Workshop for Self-Managing Database Systems (SMDB) has long been the venue for all things concerning self-management of databases; however, it’s only with the advent of Big Data and the increasing popularity of hard-to-manage data sizes, data formats, and data fire hoses that self-management finally takes center stage!

We’re excited to organize this gathering and are calling on all researchers and practitioners to consider submitting novel ideas, war stories, vision and experience papers. In the spirit of a true workshop, we’re looking for work-in-progress and have kept the format of submissions to 6 pages.

Submission deadlines are as follows:

11/19/2012 — abstract submission

11/26/2012 — full paper due

Help us spread the word and let your coworkers or students know. Feel free to print the flyer and put it on your office door or your message board!

For details visit the workshop website at http://smdb2013.cs.pitt.edu